Papago, an app that ‘learns’ to perfectly translate

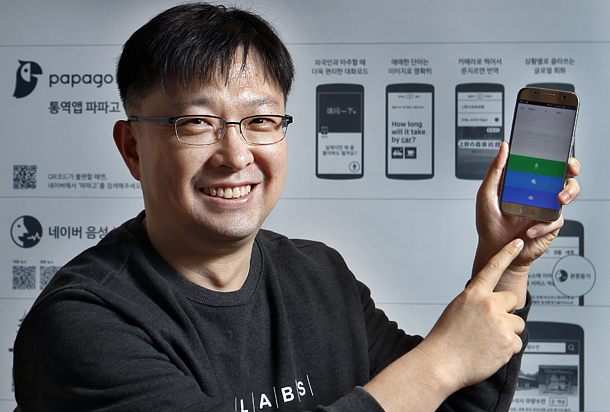

Kim Jun-seok, leader of the Papago development team at Naver Labs, holds up his phone with the Papago app at Naver’s headquarters in Seongnam, Gyeonggi. After earning a master’s degree in natural language processing from Pohang University of Science and Technology in Pohang, North Gyeongsang, he started working at LG Electronics in 2001 and spent six years doing voice recognition research. After joining Naver in 2007, Kim worked on search modeling and voice recognition and relocated to Naver Labs, a separate unit, to develop machine translation and the Papago project. [PARK SANG-MOON]

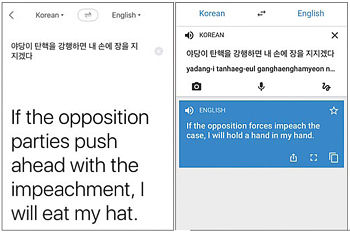

Many memes were related to results that different translation apps gave for the traditional Korean expression “jangeul jijida,” which literally means “boiling soy sauce in one’s hand while lighting a fire under it.”

While Google’s translation tool awkwardly interpreted the phrase as “hold my hand in my hand,” Papago, an app by Naver, the top search engine in Korea, showed the English expression “eat my hat.”

It may just be one example, but the fluent result from Papago was a surprise, given its status as an app from a portal site operator whose brand recognition and global standing are perceived as far behind those of the artificial-intelligence giant Google.

Papago’s translation result for jangeul jijida, left, compared to Google Translate’s version. [SCREEN CAPTURE]

Studies show that neural machine translation (NMT) produces translations that are more accurate and more natural in syntax and grammar than those of statistical machine translation (SMT), and that better reflect the way people actually speak.

Naver released the Papago app in August, just eight months after researchers at its Naver Labs unit embarked on the project. Three months later, Google revamped its 10-year-old Google Translate app, switching it from SMT to NMT for English, French, German, Spanish, Portuguese, Chinese, Japanese, Korean and Turkish.

Kim Jun-seok, leader of the Papago development team at Naver Labs, said internal evaluations showed the quality of Papago’s service was 60 out of 100 for Korean-English and English-Korean translation. That means the app still makes significant errors that a human translator would never make. Kim believes the score needs to get as high as 80 to actually satisfy users.

“We still have 20 more to go,” the developer said during a recent interview at Naver’s headquarters in Seongnam, Gyeonggi, “but since NMT has leapt past decades-old SMT in such a short span of time, it won’t be long before Papago reaches an even higher level.”

The app, available on both Android and iOS, can translate typed text as well as speech and can optically recognize characters in a photograph, features that Google’s web and mobile translation service also provides. The company says Papago will support six more languages - traditional Chinese, Spanish, French, Indonesian, Thai and Vietnamese - by next year.

Unlike Google’s service, Papago can detect whether a Korean speaker is asking a question by identifying the subtle rise in intonation. In Korean, some interrogative sentences have the same word order as statements and can only be identified by tone.

Kim said there were many other differences between SMT and NMT.

SMT, also known as phrase-based machine translation, works by breaking each sentence into words and phrases. The tool looks these fragments up in a large, statistically derived pool of past translations and then rearranges the results so that the sentence makes sense. This means the translations that came up most frequently in the past are the ones that get picked.
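The lookup step described above can be illustrated with a minimal Python sketch. It is not Papago’s or Google’s code: the phrase table, its counts and the `translate` function are hypothetical, and the reordering step that real phrase-based systems perform is left out. The sketch only shows the idea of picking the translation seen most often in the past.

```python
from collections import Counter

# Hypothetical toy phrase table (an assumption for illustration only):
# for each Korean phrase, how often each English rendering was seen
# in past translations.
PHRASE_TABLE = {
    "좋은": Counter({"good": 8, "nice": 2}),
    "아침": Counter({"morning": 9, "breakfast": 4}),
    "좋은 아침": Counter({"good morning": 7}),
}


def translate(sentence, table=PHRASE_TABLE, max_len=3):
    """Translate by greedy longest-phrase lookup, choosing the most frequent rendering."""
    words = sentence.split()
    output = []
    i = 0
    while i < len(words):
        # Try the longest candidate phrase first, then shorter ones.
        for n in range(min(max_len, len(words) - i), 0, -1):
            phrase = " ".join(words[i:i + n])
            if phrase in table:
                best, _count = table[phrase].most_common(1)[0]
                output.append(best)
                i += n
                break
        else:
            # Unknown word: pass it through unchanged.
            output.append(words[i])
            i += 1
    return " ".join(output)


print(translate("좋은 아침"))
```

Because the choice depends only on past frequency, a rare but correct rendering (here, “breakfast” for “아침”) loses out whenever a more common one exists, which is one reason phrase-by-phrase output can sound awkward.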

NMT, on the other hand, uses an artificially intelligent neural network to work on whole sentences at once and figure out the best translation. The system is modeled on the human brain and can learn from past examples to solve new problems without being explicitly programmed to do so. That means it can translate between language pairs on which it has not been directly trained and improve over time.

The system can also handle multiple language pairs. If the network has been taught to translate between English and Chinese and between English and Korean, it can also translate between Chinese and Korean without going through English.

For the deep-learning computer network to get brainier over time, it needs a vast database to study. It’s the same way Google DeepMind’s AlphaGo “learned” how to play the game of Go by analyzing a huge database of professional Go matches.

Kim’s Papago team, which collaborates with language-processing analysts and translators under the Naver umbrella, is still figuring out a way to produce the best translation results by applying various learning methods and adding databases every 15 days to a month. For this reason, a translation of the same sentence can differ depending on when you enter it.

Papago has been making the best use of Naver’s ample database: sentences from Naver’s web dictionaries, webtoons (comic strips) published on its site and the portal’s Q&A section called “KnowledgeiN,” which works much like Quora. Kim’s team even purchased multilingual technical manuals for automobiles and consumer electronics products to facilitate Papago’s machine-learning process. The webtoons help the tool improve its fluency in colloquial language.

“From the initial stage of its development, Papago was supposed to help people in real-life situations such as travel and shopping,” he said. “It would be best if Papago becomes a must-download app for foreigners visiting Korea who have no knowledge of Korean.”

Papago is part of Naver’s big-picture efforts to prepare for a future dominated by artificially intelligent services under its so-called ambient intelligence vision. The term refers to a future where consumer electronics, telecommunications and computing create environments that are sensitive and responsive to the presence and situation of users.

Under this vision, Naver has been pursuing “Project Blue,” which encompasses robotics, voice assistance, its AI-based Whale web browser and autopilot technology, in the hope of connecting them all organically.

Propped up by the global success of its mobile messaging app Line, Naver is now gearing up to branch out from being the nation’s top portal site to become a leading tech firm in the global arena.

In a related effort, Naver is spinning off Naver Labs in January, pumping in 120 billion won ($100 million) to make it an independent research subsidiary that can be fully dedicated to securing and developing core technologies for Naver’s future growth.

Kim says Papago is trying to tackle challenges with optical character recognition, as that feature lags far behind Google’s. His team only began developing it when the Papago project started, whereas Naver has had advanced technologies in speech recognition and speech synthesis since 2011 and 2013, respectively.

There are numerous obstacles to recognizing characters in an image, he said. “There are so many different fonts out there, and the varying space between letters, the quality of lighting and the angle at the point of the photo shooting hampers accurate processing.

“We humans naturally see characters in an image that the computer can’t yet.”

Will the rapid pace of translation apps’ evolution deprive human translators of their occupation? Not for the time being, Kim said. “Papago may be able to do some rough draft or conversation translations, but literature and video translation still need humans because it requires cultural understanding and there’s too much risk should the outcome turn out to be wrong.”

BY SEO JI-EUN [seo.jieun@joongang.co.kr]

with the Korea JoongAng Daily
