Machines haven't taken over yet, and that angers some

The snowball got rolling when a politician sent a text in which he complained that the headlines on Daum, which is owned by Kakao, are biased. The text was photographed by a journalist.

“Please lodge a strong complaint to Kakao about this,” Rep. Yoon Young-chan of the ruling Democratic Party wrote to an aide. “Kakao is being unreasonable. Tell them to come.”

The implication is that Yoon, who happens to have a background in technology, believes he can influence which news the company puts on its splash page.

Naver and Daum claim that the news on their landing pages is chosen by computer. They say they use algorithms and that humans do not interfere. But neither has published details about how their programs make the selections.

As true artificial intelligence (AI) doesn't exist yet, real-time human input is often part of the calculus, with companies following the fake-it-until-you-make-it principle.

Yoon has apologized, but suspicion about the integrity of the programs remains.

Naver claims its algorithm has been choosing all of the news articles on the main page since last year. Daum says it has been using machines for five years to do the same.

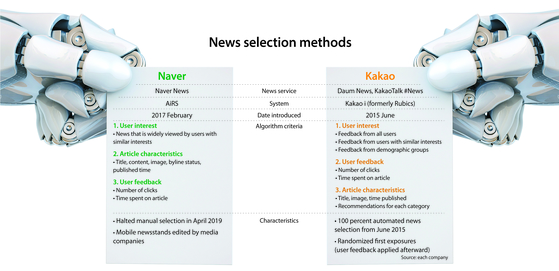

In June 2015, Kakao implemented Rubics — an AI news selection service that recommends articles based on real-time user activity. Naver started utilizing the AiRS news algorithm in February 2017. Aside from the pages directly managed by media companies, the general news selection is driven by the software, Naver claims. Before the implementation of AiRS, Naver employees selected news items for the main page.

Software caters to user taste

“DP Chairman Lee Nak-yon’s speech was not on Daum's main page. That was important news, and yet it wasn't there, so I wondered why,” Yoon told the National Assembly’s Science, ICT, Broadcasting and Communications Committee on Tuesday.

As pages are customized for each user, news of the speech may have been on the pages of others even if it did not show up on Yoon's Daum splash page. For people who have accounts with the site, their reading and search history guides the choice of articles.

Daum's own records indicate that the speech did show up on the landing page, but it is possible that Yoon's browsing history resulted in the speech being left off that page.

For some, this is not the point at all. The problem for them is that Yoon thought he could influence news choice and that his confidence in the matter suggests that the tech companies would comply.

Are portals “irresponsibly” blaming the algorithm?

Critics say the tech companies should consider revealing the algorithms behind the news selection process. It is not enough to simply say that the program is working properly, they argue. They need to prove that assertion by allowing for an inspection of the code.

“It is wrong to say that the news selection by an AI algorithm is unbiased without analyzing how it makes such judgments,” Lee Jae-woong, Daum’s founder, said in a Facebook post on Tuesday. “The AI’s standards of value judgment and how it edits the news section should be revealed.”

Lee left the company in 2008.

Others question whether bias can be programmed in. Algorithms are written by people, and it cannot be claimed that their views are not reflected in the code until it has been reviewed.

“AI functions in ways that are built by humans, and hence it is ideal for portals to confirm their neutrality with the help of external algorithm review committees,” said Kim Seung-joo, a professor at Korea University School of Cybersecurity.

Rhee June-woong, a professor at Seoul National University's department of communication, disagrees: “The purpose of Naver and Daum’s algorithm is to maximize the time spent on their web page using the characteristics of their user base. Hence their AI news selection should be irrelevant to the discussion of neutrality.”

BY HA SUN-YOUNG, KIM JUNG-MIN [lee.jeeyoung1@joongang.co.kr]

with the Korea JoongAng Daily
